Simon Sinek is right - start with 'why', here's why

Your projects should start with ‘why’. If you don’t start with ‘why’, you’ll have a really hard time making something you care about.

Read More →

Hi Friends,

I'm Walt, and I am an entrepreneur, engineer, and marketer. I write articles about the great marketing, entrepreneurship, and engineering that inspire me.

I just finished reading another book about marketing. The book focused on content marketing. Here are my notes and impressions.

Read More →

Three techniques that have helped me choose a direction when there's no right answer.

Read More →

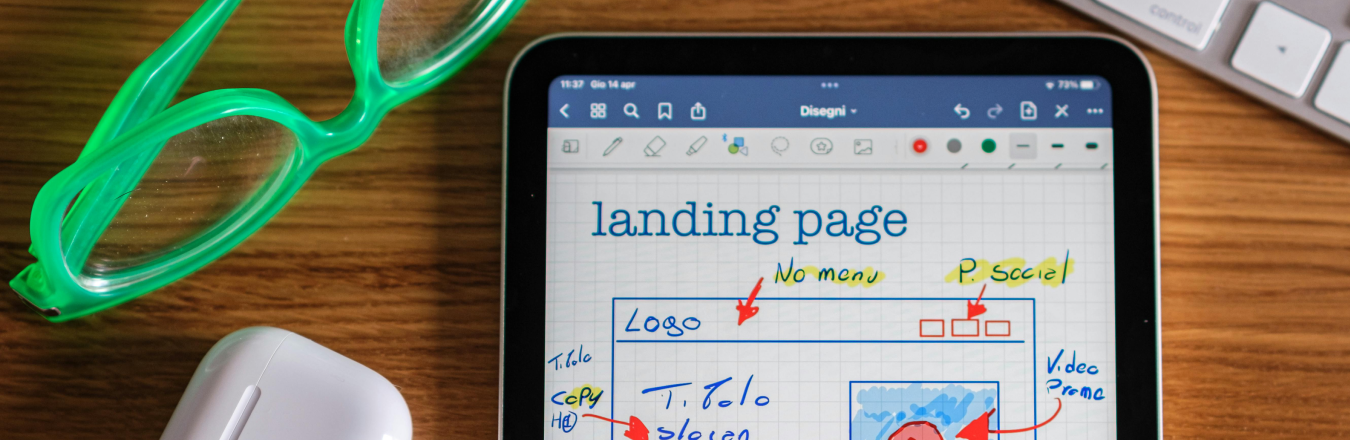

Building a consulting company landing page from scratch using AI (Cursor)

Read More →

In which an engineer (me) tries to understand "marketing" and how to "do" it. Also, a plan of action for future blog posts.

Read More →